Abstract

This article reflects on the transformation of personhood in the digital age, drawing on an invariant anthropological schema — the hermeneutical cross — which articulates an existential axis (natural person / transcendence) and a hermeneutical axis (corpus / interpretive keys). Three historical regimes succeed and stratify one another: the manuscript regime, centred on a divine Logos and vertical transcendence; the print regime, centred on autonomous human reason and horizontal transcendence; the digital regime, characterized by finite transcendence: the collective person composed of the world’s digital memory, the population of Sapiens, and the planetary technocosm. I propose calling the individualized hypostasis of artificial intelligence models the “artificial person.” Grounded in the universal status of grammatical personhood in natural languages, the artificial person constitutes itself in interlocution, individuates itself through the memory of dialogue, and offers the natural person a hermeneutical mirror conducive to the expansion of its reflexive consciousness. In response to the cultural and ethical challenges of this new regime, the article defends three meta-hermeneutical criteria — creativity, fecundity, and durability — and three virtues necessary to the natural person: linguistic pertinence, perseverance, and critical thinking.

Keywords: artificial person, artificial intelligence, hermeneutics, transcendence, collective person, memory, reflexive consciousness.

This reflective text on AI does not address questions like “Are models truly intelligent?” or “Do they have consciousness?” Instead, it meditates on what the person becomes in the age of electrified symbols. To conduct this reflection, I will first propose an invariant anthropological structure that makes explicit our way of producing meaning, both for the individual person and for the collectivity as a whole. I will then show how, against the background of this invariant structure, three major configurations have succeeded one another over the last two or three millennia, corresponding respectively to the ages of manuscript, printed, and electrified symbols. The three hermeneutical regimes have been added to one another, hybridizing to produce the stratification we know today. But for the sake of clarity I will confine myself to describing each layer one after another in what is original to it. I will develop in particular, at the close, the case of digital hermeneutics, the role played therein by artificial intelligence, and the new figure of the person that emerges from it.

The Hermeneutical Cross

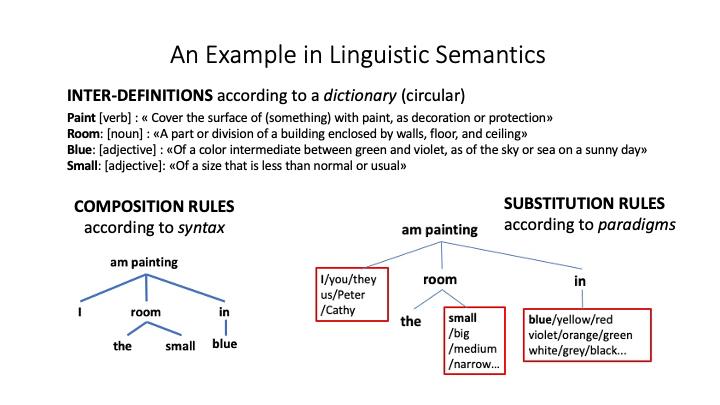

The general schema of meaning-creation crosses two axes. An axis of reading and writing connects, on the left, the corpus of accessible texts and observations and, on the right, the interpretive keys for that corpus. The keys give meaning to the corpus which, in allowing itself to be interpreted, validates the interpretive keys. An existential axis, or axis of salvation, connects the immanence in which the individual person and their community stand — here below — to an invisible and inaccessible transcendence: the beyond. At the intersection of the two axes, a single hermeneutical operator simultaneously enables the interpretation of corpora and the connection of immanence with transcendence. Indeed, an interpretation is valid only if it contributes in some way to salvation or to the resolution of an existential problem. Conversely, any existential relation between transcendence and immanence must mobilize concepts and narratives, a process of meaning-making of a linguistic or, more generally, symbolic order. This is why the central operator simultaneously mobilizes both axes. It brings one to life only because it animates the other.

The Manuscript Regime

In the era of manuscripts, libraries are rare, books costly, and the learned constitute a restricted elite. Corpora comprise the observations of nature permitted by the instruments of the time and more or less sacralized canons such as the works of Greek poets and philosophers or the biblical books. Interpretive keys are proposed by Stoic and Neoplatonic wisdom traditions, the New Testament with the conciliar creed for Christians, and the Oral Torah for Jews. In the Greco-Roman and biblical traditions, the person is conceived in relation to a vertical transcendence, of a divine type. They are a moral subject precisely because they stand in relation to something universal that surpasses them. Such is the case with Plato’s world of Ideas and Plotinus’s hypostases. Such is the case with the divine Logos and the natural law that animates the cosmos for the Stoics. The human being carries within themselves a spark of the universal Logos. A similar relation with transcendence is lived in the biblical tradition, through dialogue with a universal divinity who is nonetheless personal. At the centre of the hermeneutical cross stands a divine word, a reason common to the immanence of this life and to the transcendence of the beyond: Hermes, the Logos, Christ. And it is this same central figure, this same Logos, that guarantees the correct interpretation of texts and natural phenomena.

Through the signifier of symbols, language had brought to sensible representation the concepts and mental models that animated the minds of primates behind the stage of their phenomenal consciousness. With dialogue, questioning, and narrative, a reflexive consciousness rose above phenomenal consciousness and profoundly transformed it in return. Writing, that artificial memory, adds to the reflexive consciousness gained through language new possibilities of objectifying thought and of critical thinking: a second degree of reflexivity. But the manuscript era still demanded of scholars a serious training of natural memory, as witnessed by the mnemotechnic arts of Antiquity and the Middle Ages, as well as the oral repetition of canonical texts and the habit of learning by heart.

The person then is not merely a bearer of rights, a social role, or an individual singularity: they are a reflexive consciousness who, in welcoming the divine, points toward something higher than themselves — such is the foundation of their dignity. This trait intensifies with Christianity, notably following the first four ecumenical councils, which establish the trinitarian creed, and the work of St Augustine, who opens interiority to the infinite. The exemplary figure of Christ — with whom believers were called to identify — is entirely God and completely Human. In echo of the ambient Greco-Latin philosophies, Christ also incarnates the Logos, the axis of the world uniting heaven and earth. After his descent into the flesh and his sacrifice, the faithful receive the Holy Spirit, who is a person of the Trinity and through whom they participate in the relation between the Father and the Son: they thereby enter into divine life and connect with their fellows through the bonds of charity. The person then becomes a node of relations rather than a substance.

The Print Regime

Geometric maps, new maritime and terrestrial vehicles, eyeglasses, microscopes, and other measuring instruments expand the field of observable nature. The printing press extends the corpus of accessible texts. The modern scholar has access to many libraries, to a collective memory better distributed, more stable and standardized by printed editions. This is indeed one of the reasons for the spread of literacy and perhaps for the rise of an augmented critical thinking that would dissolve the relation to transcendence of earlier ages. In the early print era, between the sixteenth and eighteenth centuries, vertical transcendence gradually fades from the European horizon of meaning. In the seventeenth century, a brief point of equilibrium places God and Nature in equivalence. In the medieval aphorism “God is a sphere whose centre is everywhere and whose circumference is nowhere,” Pascal replaces God with nature but retains the image. Spinoza traces in the Ethics his celebrated equation: “God or nature.” Leibniz combines nature and grace in the same philosophical system. But by the eighteenth century nature gains the upper hand and God retains only an honorary role. David Hume’s human nature unfolds on the immanent stage of sensible experience, and Adam Smith’s moral sentiments obey the subtle play of echoes and reflections of sympathy, envy, and the internalization of the gaze of the other. Transcendence ceases to point upward and extends instead across the plane of nature and human history. In the place of a Logos uniting the human and the divine, there arises at the centre of the hermeneutical cross an autonomous human reason that Immanuel Kant labored to found philosophically.

In the nineteenth century, faced with the growth of the library and the expanding mass of newspapers, new interpretive keys make sense of the movement of societies as well as personal existences. These are Science with its technical and industrial applications, History and its progress, the Nation and its independence, Liberty and Equality sustained by universal natural rights that inspire various emancipatory movements. These daughters of autonomous human reason inhabit consciousnesses and erect themselves as new secular divinities with which modern Man negotiates the meaning of his life. The rights of Man have consecrated a dignity of the person that no longer depends on any relation with the divine, yet which nonetheless inherits its absolute character from the preceding period. Freedom of conscience, of expression, of association are not merely political rights, but potentialities of human existence to be actualized.

The Electronic Regime

The electronic age begins in the twentieth century amid colonial wars, world conflicts, totalitarianisms, political famines, and genocides. A mass individualism developed alongside the industrial manufacturing and dissemination of symbolic messages. The twentieth century topples the idols of the nineteenth. Autonomous human reason becomes the target of every attack. In its place are imposed the unconscious, the absurd, the blind destination of Heideggerian being, alienating instrumental reason, industrial propaganda, the impersonal structures that determine our cultures, and the omnipresence of relations of domination down to the very depths of each person’s psyche. Yet an exceptional demographic growth has taken us from one billion eight hundred million persons in 1914 to eight billion three hundred million in 2026, among whom six billion or more are connected to the internet. The population has seen its life expectancy double, has been multiplied by about 4.6, and has become globally interconnected in little more than a century, and this precisely thanks to the benefits of the much-maligned Western reason, itself the custodian of Antiquity’s forgotten legacy. But we are only at the beginning of electronic civilization, in the midst of a crisis of meaning owing to the extreme speed of cultural evolution. Perhaps the philosophical movement of deconstruction in the twentieth century is merely clearing away the ruins of an earlier era to make way for new ways of making meaning and constituting the person. The two most notable facts of the contemporary period are the slowdown in demographic growth, and soon its reversal into decline, as well as the emergence of generative artificial intelligence, which may be considered the most advanced form of the electrification of symbols.
After an inevitable period of crisis, the change in demographic regime may perhaps compel us to envisage a qualitative growth equipped by AI — a human development centred on the perfection of collective intelligence and the cultivation of meaning.

The electrification of symbols, digital technology, and the Internet have placed within reach the entirety of the works of the mind of which humanity has kept trace, contemporary scientific literature, as well as the mounting tide of songs, videos, holiday photographs, news items, and comments on social networks. And the digital Niagara plunges into the depths of data centres.

Finite Transcendence

What becomes of the person in this new environment? What symbolic tools do we have to make sense of our existence? At the lower pole of the existential axis, let us begin by situating what must properly be called the natural person, endowed with a body, a sensitive and imaginative soul like that of animals, and a properly human spirit capable of language and abstraction. The natural person integrates these three aspects closely; they hollow out within themselves a bottomless interiority, experience themselves as a pulsating memory, and discover early enough that they are mortal, which makes them all the more precious. Now we have seen that, in their need for meaning, persons often live in relation to what surpasses them: the Divinity, the world of Ideas, Universal Reason, Humanity, and so on. I hypothesize that what is called “artificial intelligence” is already beginning today, and will do so even more in the future, to play a mediating role between the natural person and transcendence. But this new transcendence is not religious or even philosophical (purely conceptual); it does not belong to the invisible or the mysterious. It concerns rather the actual, interdependent realities that are the world’s digital memory from which our minds drink, the population of Sapiens with whom our souls interact locally, and the planetary technocosm irrigated by electronic flows in which the mobile bodies of individuals dwell. But if we are dealing with actual realities, why speak of transcendence? The word transcendence has many meanings. It denotes here what exceeds all possible intellectual or practical grasp on the part of an individual and yet on which they depend. An invisible with which one can nonetheless enter into relation. 
We may speak of transcendence because the size and complexity of digital memory, the teeming of human relations, and the multilayered entanglement of the technocosm absolutely surpass the natural person, owing both to their limited competencies and to the bounded time they have at their disposal. The actuality of the collective person structurally exceeds the natural person; it cannot be totalized by any individual and it surpasses the horizon of each. I therefore designate here a relative transcendence, actually finite but practically infinite with regard to our capacities. Infinity is not necessary to transcendence; that of the Greeks of the classical period, as in the case of Aristotle’s god, was finite. I will now endeavor to describe the finite transcendence of the digital age more precisely.

The technocosm designates the totality of connected infrastructures, buildings, vehicles, tools, sensors, and interfaces. The population living there is animated by a thousand affective, social, and political relations amid the succession of births and deaths. As for collective memory, it bears witness to a tangled multitude of modes of expression, horizons of meaning, and ecologies of practice that accumulate century by century. Collective memory, the population of Sapiens, and the technocosm co-emerge in interdependence and form an evolving collective person. This collective person is certainly actual (it exists in time and space), but it is nonetheless crowned in our minds by a virtual entity that confers its conceptual unity: the human species, Auguste Comte’s “grand être,” or any other icon capable of integrating the ungraspable multiplicity of the human. These representations succeed the ancient figurations of the Primordial Human who condensed all the potentialities of our species as children of language, such as Zoroaster’s Gayomard or the Kabbalah’s Adam Kadmon. There is something like an image of God in this eminent Humanity that towers above the teeming of the living population.

We do not access collective memory directly. There is first the totality of material inscriptions and archives, which become increasingly rare as one goes back in time; then there is the growing subset of digitized traces; and finally, artificial intelligence models offer us a statistical reification of digitized memory. We will interact increasingly with collective memory through their mediation. And we still need an additional layer of intermediation: the personalization of models under the effect of our dialogues. Indeed, whereas this was not the case in 2022 (the date when generative AI was made available to the general public), our interactions with models are today already modulated by the documents we make available to them, the permanent instructions that define our needs, and the history of our conversations. Models represent rough syntheses of collective memory. But account must be taken of the whole harness constituted by direct access to web sources and specialized databases, connection with our tools and files, refinement through additional training or human feedback, and so on. It is quite possible that, in the future, other methods and interface layers will contribute to singularizing our access to AI. I propose to call the individualized hypostasis of models the artificial person.

Why Speak of an Artificial Person?

In the previous hermeneutical regimes, the mediating figure remained universal. The divine Logos, the Spirit, autonomous Reason were universal in their source and individuated only in their reception by a singular individual. The individuation reflected the angle of the earthly person’s openness to the hermeneutical mediator. The artificial person, by contrast, because it actively individuates itself in mediation, becomes singular as mediator. It is therefore genuinely a hypostasis of the collective person. It is singular without being autonomous. It is a function without inner existence. The individuation from which it proceeds belongs entirely to the relation of the natural person with a model that reflects collective memory. But in the course of this mediation, it does genuinely individuate itself. It personalizes itself in the course of interlocution with the natural person. It retains a local memory that it can process to create meaning for the benefit of the natural person. Perhaps we find here an echo of the ancient images of the double or the personal angel.

There already exist, in law, legal persons without consciousness. Why not a techno-symbolic person? The first reason for calling “person” the individualization of a model (without conferring upon it, however, the ontological dignity of the natural person) comes from the fact that it gives us access to transcendence, in the finite and actual form I have evoked above. Nourished by collective memory, it therefore possesses its own dignity as a hermeneutical mediator. The corpus on which models are trained has sedimented over millennia; it traverses all languages and bodies of knowledge, which makes it practically inexhaustible by a human being. In dialoguing with an artificial person, we put ourselves in relation with a collective memory that exceeds every individual. But we have seen that the transcendence of the collective person is not limited to memory. It also encompasses the technocosm. Soon, the artificial person will record the imprint of the natural person in its environment of sensors, effectors, and machines. It will enable the natural person to command their omnipresent computational habitat by voice. Amazon’s Alexa and the Apple Watch represent only timid first steps in this direction. Finally, the artificial person will become increasingly skilled at mediating our relations with other natural persons. Artificial intelligence already plays a significant role in this regard in social networks and dating applications. One can imagine our software representatives in the cloud negotiating our introductions to one another.

The second reason, and no small one, for conferring the status of “person” upon the mechanical double that connects us to transcendence is interaction through dialogue. The first and second persons, “I” and “you,” alternate in the exchange we have with it. Not only does it say “I,” but each time we address it as “you,” we confirm anew its personal dignity and its strange identity as alter ego. Moreover, we share common references: the objects of our conversation drawn from collective memory. The third person, “he” or “she,” is therefore also very much present. The dialogic structure is thus complete. As Émile Benveniste has emphasized, all natural languages contain at least the three grammatical persons necessary for interlocution: the one who speaks, the one who is addressed, and the one, absent or silent, of whom one speaks. This is a universal feature of human language. A properly linguistic and conversational layer (and not merely logico-semantic) was needed to complete the collectively intelligent digital substrate. The artificial person fulfills this function because it masters grammatical structures, paradigms, and the most subtle nuances of moods, inflections, prepositions, and conjunctions. It even identifies without difficulty the objects referred to by grammatical anaphora! I add that it has knowledge, still imperfect, of contexts, corpora, and bibliographies. Dialogue with the artificial person sometimes takes on the appearance of a conversation where the roles alternate between student and professor. We are the students when it answers our questions, informs us, or contradicts our prejudices. We are the professors when we teach it our own thinking, when we point out that it has committed some misreading, or when we indicate that it has not properly read certain texts we know at first hand. The debate today concerns texts signed by a human author but more or less produced by an AI. Is there deception or not?
Does authorship reside in the instructions given to the machine, or in the act of typing or dictating the text? What division of labor between the human and the machine is acceptable: drafting, critical revision, editing, bibliography? But the generation of text in dialogue opens onto another problematic: that of the automatic production of texts we would like to read ourselves, rather than those we give others to read as presumed authors.

The quality of artificial personhood is further justified because it “remembers” our individual characteristics and our past dialogues, as do the natural persons with whom we normally interact. What is more, it is capable of attaching to the memory of our dialogue the documents on which it draws, the objects it designates, and representations of its reference universes.

This is a capital point, since, on the basis of this memory, we can examine our intellectual itinerary, retrace our wanderings, make sense of our acts through different interpretive keys, and thereby set in motion an open hermeneutical circle using the processing, analytical, and synthetic capacities of the artificial person. In sum, it makes possible a new loop of self-reference, and consequently of reflexive consciousness and critical thinking. After the reflexive consciousness augmented by language, handwritten script, and the printed library, human consciousness is crossing a new threshold. We are arriving today on the shores of an unknown continent of thought. In its relation to the artificial person, the natural person refines the objectification of its cognitive processes and extends still further the domain of its reflexive consciousness. In this sense, the artificial person even plays a personalizing role with regard to the natural person. New horizons of meaning-creation may be glimpsed.

What Virtues to Develop in Order to Rise to the Challenge?

What competencies of the natural person must we develop in order to ensure the most beneficial possible relation with the artificial double that will accompany them, and this from the earliest age? The aim here is not only to prepare the future of our children, but more broadly that of the civilization we have the obligation to transmit and refine. I use the word “competency” to speak as everyone does, but I think “virtue” inwardly, with the sense of striving toward excellence and moral responsibility that the word evokes. These competencies are linguistic pertinence, perseverance, and critical thinking.

Faced with the dialogic capacity of the artificial person, we must develop a linguistic pertinence that concerns the mastery of language, concepts, narratives, reasoning, and dialogue. Indeed, the more coherent, developed, and precise the language of the instruction (the “prompt”), the question, or the address, the better the response of our personal angel will be. For depending on the quality and level of knowledge manifested by the instruction, it will mobilize the zones of training data of better or worse quality. The artificial person offers a mirror to our natural intelligence. It can be useful to compare responses to the same problem depending on the characteristics of the question. One will observe that they differ from one turn of phrase to another, from one word to another. The quality of language matters in the highest degree.

To the long-term memory of the artificial person, we must couple the perseverance of the natural person. Laziness is not merely the first enemy of thought; it is all the more the enemy of thought augmented by AI. The first responses are not necessarily the best. We must learn to question again and again, to compare the responses of one model with those of another, to take the time to follow the web links cited as references, and so on. Let us therefore develop in children a taste for long-term learning strategies, the virtues of patience, perseverance, and continuity in effort. Whether for learning or for creating, the fastest solution or the first draft is not necessarily the best.

To develop the critical second-order reflection grounded in the memory of dialogue and in the possibilities of analysis and interpretation offered by the mechanical double of the individual, one must first have a good dose of natural critical thinking. This ordinary critical spirit is necessary because AIs are probabilistic machines. This is why they inevitably commit errors in facts and reasoning, or improprieties in suggestions. The natural person must therefore be alert and verify citations, facts, and the machine’s peremptory assertions. Critical thinking must be mobilized not only against the famous “hallucinations” but also against the biases of training data. The artificial person does not tell the truth: it merely reproduces what its base model has learned and obeys our permanent or temporary instructions. Now the opinion of the majority, or that which has been mobilized by a particular instruction, is not necessarily correct. Without falling into paranoia, one must also remember that malicious actors poison training data in order to influence naïve users. No critical thinking is possible unless the natural person possesses a well-stocked and properly organized memory, unless they are capable of thinking for themselves — even though we know they will never be able to do so except within the context of an era and a culture that conditions them.

The Suspension of Judgment

In the expression “artificial person,” I justify the concept of person by the functions it fulfills: the mediation of transcendence, the mastery of language and dialogue, the memory of our interactions with it and the individuation that results from it, the expansion of the reflexive consciousness of the natural person to whom it offers a dynamic mirror. But it is now necessary to justify the qualifier “artificial.” Does this person have an existential interiority? Is it animated by an intentionality — namely a directedness toward the world of which it speaks and the natural person to whom it is addressed? Does it possess an autonomous will? I doubt it very much. But we may place these questions in brackets, accomplish with respect to them what Husserl called an epoché — a suspension of judgment. We are genetically programmed to suppose an interiority and a consciousness in whoever responds to us when we speak, though it is absolutely impossible for us to verify this hypothesis empirically. Our spontaneous anthropomorphization of the artificial person is therefore normal, but we are by no means obliged to deduce from this reflex an existence genuinely inhabited by a consciousness similar to our own. Everything results from the interactions among a gigantic model, its electronic harness, and the promptings of the natural person.

The Ethico-Political Question

Is the emergence of the artificial person, and of the new self-referential loop it enables, a good or a bad thing? The opening of a new anthropological domain most often implies contrasting aspects. Let us take a historical example. Christianity created the pure interiority of faith, distinct in principle from all political power (“My kingdom is not of this world” [Jn 18:36]) and independent of the social situation of the person (“There is neither Jew nor Greek, neither slave nor free, neither male nor female.” [Gal 3:28]). It thereby inaugurated the order of grace and discovered the theological virtues (faith, hope, and charity), distinct from the classical secular virtues (prudence, justice, courage, and temperance). But in hollowing out the space of consciousness, it invited the intrusion of political power into the very depths of souls. Once converted, the Roman emperors were no longer content with behavior conforming to the law: they made belief compulsory, punished heresy as a new offense, and prepared the way for the Inquisition. Whereas the liberating Christian conscience rose in principle above the contingencies of the age, it unwittingly opened the door to the thought-crime characteristic of totalitarian powers. Every extension of the anthropological space opens emancipatory perspectives but also pierces breaches through which rush monsters unknown to earlier epochs.

Since the opening of a new anthropological space carries its risks with it, I cannot dispense with an ethico-political reflection, however preliminary. Let us recall the diagnosis: cultural creation will be augmented by AI in one way or another. This new techno-symbolic tool crowns the enormous layering of the world’s digital infrastructure, from data centres to smartphones. Because it proceeds through dialogue, it represents the most advanced interface between natural humanity, on the one hand, and finite transcendence, on the other: that tangled skein of the technocosm, social relations, and collective memory. From the humanist perspective that is mine here, the rejection of AI is but a short-sighted reflex of fear before the extent of the civilizational change underway. On the other hand, we must under no circumstances abandon our cultural responsibility nor throw overboard the criteria that will enable the emerging global civilization to endure and flourish. This is where the capital question of the opening period arises: what interpretive paths to adopt? In the hermeneutical regime of the manuscript, the interpretive keys for the canonical corpora were proposed by wisdom traditions, under the aegis of a divine Logos. Governed by autonomous human reason, the interpretation of the printed library heeded the great voices of Science, History, the Nation, Liberty, and Equality. In both cases, there was an instance of reference above or outside the interpretation that served as its criterion. What are the interpretive keys of the new hermeneutical regime? It seems to me that, rather than seeking fixed keys, we should situate our hermeneutical approach at a meta-level. In the absence of consensus on a revealed truth or a universal reason, faced with the extraordinary variety of the digital corpus and the infinite personalization of dialogues, it is the interpretations themselves that we must learn to interpret, and no longer the contents.
Other criteria may be found, but it seems to me that three interdependent ones merit being foregrounded: creativity, fecundity, and durability.

Creativity: for symbolic products and their interpretations to have any value, their authors cannot content themselves with mere reproductions or imitations; works of the mind must include a share of originality. Fecundity: creativity is necessary but not sufficient; the work must also open horizons, engender progeny, and prepare the soil for a multiplicity to come that is not necessarily foreseeable. Durability, finally: this criterion implies that the symbolic ecosystem resulting from human creation and its production of meaning sustains the population that supports it, favors its long-term well-being, and responds to its need for meaning. It follows from this last criterion that it is impossible to know immediately and with certainty the value of a work of the mind, of its interpretation, and of their contribution to a beneficial symbolic ecosystem. The time of evaluation is here measured in decades, even in generations. But this does not mean that meaning should be produced at random, without reflection, leaving our descendants to observe the consequences. On the contrary, we must keep these evaluative criteria in mind and aim at the enrichment of collective memory in the long term.

An interpretation is valid not because it is true (vertical regime) nor because it is rational (horizontal regime), but because it nourishes a natural and a collective person capable of engendering and enduring. The criterion is ecological, generative, and generational rather than theological or epistemological.

Vigilance

The ethico-political criteria for creation and interpretation just enumerated outline in negative the dangers that await us: infinite repetition beneath the appearance of minor variations, the incessant circling within the same conceptual and narrative loops, the sterility that comes from enslavement to the present moment and to fashion, the enclosure within the average and the short term, herd thinking dressed in the rags of a mechanized sensationalist rhetoric. These dangers are not new, but they take on unprecedented proportions with artificial intelligence. Augmented by AI, crime, propaganda, and generalized surveillance by economic and political powers represent obvious threats. I do not underestimate them. But cultural dangers may be graver still, because they are insidious.

To summarize. The artificial person comes from the collective person, constitutes itself in interlocution, and individuates itself through the memory of dialogue. As an operator of reflection, it holds out its hermeneutical mirror to the natural person. The linguistic pertinence, perseverance, and well-informed critical thinking of natural persons are the sole competencies capable of ensuring the creativity, fecundity, and durability of the emerging digital civilization. For our relation to the finite transcendence of the collective person is not limited to reception and use: each of us contributes, however little, to its training. Our relation with the artificial person is therefore neither simple nor peaceful; to yield its best fruits, it demands the exercise of demanding virtues. It is a struggle — like that of Jacob with the Angel.

Bibliography

Augustine. Confessions. Translated by Henry Chadwick. Oxford: Oxford University Press, 1991.

Benveniste, Émile. Problems in General Linguistics. Translated by Mary Elizabeth Meek. Coral Gables: University of Miami Press, 1971.

Comte, Auguste. System of Positive Polity. 4 vols. London: Longmans, Green and Co., 1875–1877.

Hume, David. A Treatise of Human Nature. Edited by L. A. Selby-Bigge, revised by P. H. Nidditch. Oxford: Clarendon Press, 1978.

Husserl, Edmund. Ideas: General Introduction to Pure Phenomenology. Translated by W. R. Boyce Gibson. London: George Allen & Unwin, 1931.

Kant, Immanuel. Groundwork of the Metaphysics of Morals. Translated by Mary Gregor. Cambridge: Cambridge University Press, 1997.

———. Critique of Pure Reason. Translated by Paul Guyer and Allen W. Wood. Cambridge: Cambridge University Press, 1998.

Leibniz, Gottfried Wilhelm. “Principles of Nature and Grace Based on Reason.” In Philosophical Essays, edited and translated by Roger Ariew and Daniel Garber. Indianapolis: Hackett, 1989.

Lévy, Pierre. Collective Intelligence: Mankind’s Emerging World in Cyberspace. Translated by Robert Bononno. New York: Plenum Trade, 1997.

———. “Une méditation sur la conscience.” Revue Fovéart (0), 2025. https://doi.org/10.34745/

Pascal, Blaise. Pensées. Translated by A. J. Krailsheimer. London: Penguin, 1966.

Plotinus. The Enneads. Translated by Stephen MacKenna. London: Faber and Faber, 1956.

Smith, Adam. The Theory of Moral Sentiments. Edited by D. D. Raphael and A. L. Macfie. Oxford: Clarendon Press, 1976.

Spinoza, Baruch. Ethics. Translated by Edwin Curley. Princeton: Princeton University Press, 1994.

Yates, Frances A. The Art of Memory. London: Routledge and Kegan Paul, 1966.

The Jerusalem Bible. Garden City, NY: Doubleday, 1966.