INTRODUCTION

Almost 30 years ago now, I published a book dedicated to digital-based collective intelligence which was, modesty aside, the first to address this topic. In this work, I predicted that the Internet would become the main medium of communication, that it would bring about a change in civilization, and I said that the best use we could make of digital technologies was to enhance collective intelligence (and let me add: an emerging, ‘bottom-up’ type of collective intelligence).

At that time, less than 1% of humanity was connected to the Internet, while today, in 2023, more than two-thirds of the world’s population is online. The change in civilization seems quite evident, although it normally takes several generations to confirm this type of shift, and we are only at the beginning of the digital revolution. As for the enhancement of collective intelligence, many steps have been taken to make knowledge accessible to all (Wikipedia, open-source software, digitized libraries and museums, open-access scientific articles, certain aspects of social media, etc.). But much remains to be done. Using artificial intelligence to enhance collective intelligence seems a promising path, but how do we proceed in this direction? To answer this question rigorously, I will first need to define a few concepts.

WHAT IS INTELLIGENCE?

Before even addressing the relationship between human collective intelligence and artificial intelligence, let’s try to define in a few words intelligence in general and human intelligence in particular. It is often said that intelligence is the ability to solve problems. To which I respond: yes, but it is also and above all the ability to conceive or construct problems. If one has a problem, it means one is trying to achieve a certain result and is faced with a difficulty or obstacle. In other words, there is a “Self”, which has its own internal logic, which must maintain itself within certain homeostatic limits, and which has immanent goals such as reproduction, feeding, or development; and there is an “Other”, an exteriority, which follows a different logic, which merges with or belongs to the environment of the Self, and with which the Self must negotiate. The intelligent entity must have a certain autonomy, otherwise it would not be intelligent at all, but this autonomy is not self-sufficiency or absolute independence because, in that case, it would have no problems to solve and would not need to be intelligent.

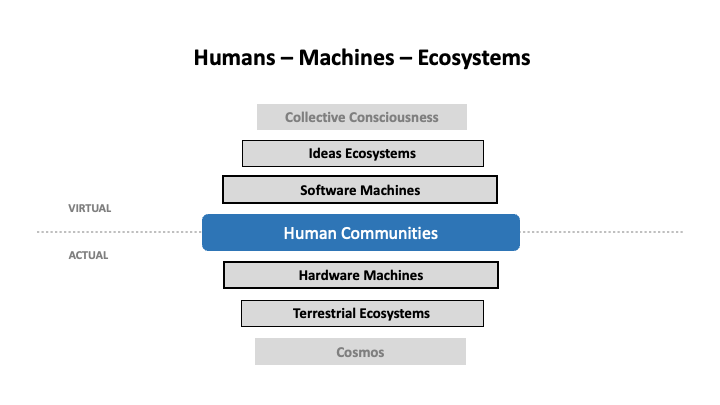

Figure 1

The relationship between the Self and the Other (Figure 1) can be reduced to communication or interaction between entities that are governed by different ways of being, codes, and heterogeneous purposes, thus imposing an uncertain and improvable process of encoding and decoding. This process inevitably generates losses and creations, and is subject to all kinds of noise and interference.

The intelligent entity is not necessarily an individual; it can be a society or an ecosystem. Moreover, upon analysis, one will often find in its place an ecosystem of molecules, cells, neurons, cognitive modules, and so on. As for the relationship between the Self and the Other, it constitutes the elementary mesh of any ecosystemic network. Intelligence is the trait of an ecosystem in relation with other ecosystems; it is collective by nature. In summary, the problem comes down to optimizing communication with a heterogeneous Other based on the purposes of the Self, and the solution is none other than the actual history of their relations.

INTELLIGENCE COMPLEXITY LAYERS

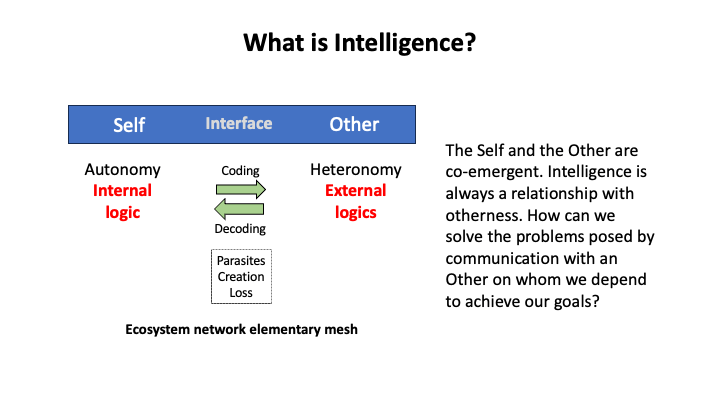

Our main focus is on human intelligence enhanced by digital technology. Let’s not forget, however, that our intelligence is based on layers of complexity that predate the appearance of the Homo species on Earth (Figure 2). The complexity layers of organic and animal intelligence are still active and indispensable to our own intelligence, since we are living beings with an organism and animals with a nervous system. That’s why human intelligence is always embodied and situated.

Figure 2

With organisms come the well-known properties of self-reproduction, self-reference and self-repair, based on molecular communication and no doubt also complex forms of electromagnetic communication. I won’t go into the subject of organic intelligence here. Suffice it to say that some researchers in biology and ecology now speak of “vegetal cognition”.

The development of the nervous system stems from the need for locomotion. First, the sensory-motor loop must be ensured. Over the course of evolution, this reflex loop became more complex, involving simulation of the environment, evaluation of the situation and decision-making calculations leading to action. Animal intelligence results from the folding of organic intelligence upon itself, as the nervous system maps and synthesizes what is happening in the organism, and controls it in return. Phenomenal experience is born of this reflection.

Indeed, the nervous system produces a phenomenal experience, or consciousness, which is characterized by intentionality, i.e. the fact of relating to something that is not necessarily the animal itself. Animal intelligence represents the Other. It is inhabited by multimodal sensory images (cenesthesia, touch, taste, smell, hearing, sight), pleasure and pain, emotions, the spatio-temporal framing essential to locomotion, the relationship to a territory, and an often complex social communication. Clearly, animals are capable of recognizing prey, predators or sexual partners and acting accordingly. This is only possible because neural circuits encode interaction patterns or concepts that orient, coordinate and give meaning to phenomenal experience.

HUMAN INTELLIGENCE

I’ve just mentioned animal intelligence, which is based on the nervous system. How can we characterize human intelligence, supported by symbolic coding? The general categories, concepts and patterns of interaction that were simply encoded by neural circuits in animal intelligence are now also represented in phenomenal experience via symbolic systems, the most important of which is language (Figure 3). Meaningful images (speech, writing, visual representations, ritual gestures…) represent abstract concepts, and these concepts can be syntactically combined to form complex semantic architectures.

Figure 3

As a result, most dimensions of human phenomenal experience – including sensori-motricity, affectivity, spatio-temporality and memory – are projected onto symbolic systems and controlled in turn by symbolic thought. Human intelligence and consciousness are reflexive. Moreover, for symbolic thought to take shape, symbolic systems – which are always of social origin – must be internalized by individuals, becoming an integral part of their psyche and “hard-wired” into their nervous systems. Consequently, symbolic communication directly engages human nervous systems. We can’t fail to understand what someone is saying if we know the language, and the effects on our emotions and mental representations are almost inevitable. We could also take the example of the psycho-physical and affective synchronization produced by music. This is why human social cohesion is at least as strong as that of eusocial animals like bees and ants.

Note that Figure 3, like several figures that follow, evokes a partition and interdependence between the virtual and the actual. In 1995, I published a book on the virtual that was both a philosophical and anthropological meditation on the concept of virtuality, and an attempt to put this concept to work on contemporary objects. My philosophical thesis is simple: that which is only possible, but not realized, does not exist. By contrast, that which is virtual but not actualized does exist. The virtual, that which is potential, abstract, immaterial, informational or ideal, weighs on situations, conditions our choices, provokes effects and enters into a dialectic or interdependent relationship with the actual.

COLLECTIVE INTELLIGENCE ECOSYSTEMS

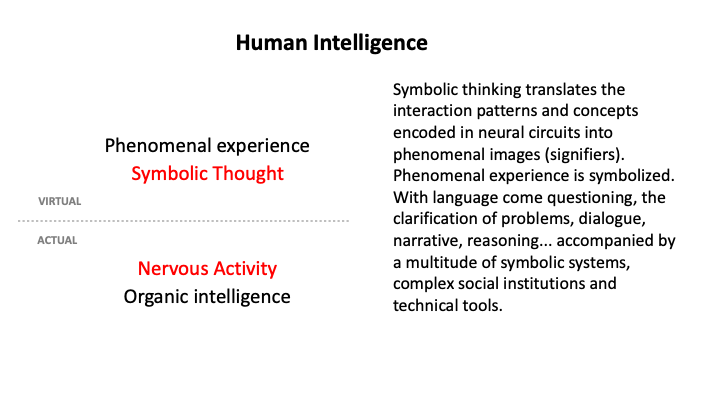

Figure 4 maps the main hubs of collective human intelligence or, if you prefer, the culture that comes with symbolic thinking. The diagram is organized by two intersecting symmetries. The first – binary – symmetry is that of the virtual and the actual. The actual is immersed in space and time, and is rather concrete, whereas the virtual is rather abstract and has no spatio-temporal address. The second – ternary – symmetry is that of sign, being and thing, inspired by the semiotic triangle. The thing is what the sign represents, and the being is the subject for whom the sign represents the thing. To the left (sign) stand symbolic systems, knowledge and communication; in the middle (being) stand subjectivity, ethics and society; to the right (thing) extend the capacity to do, the economy, technology and the physical dimension. It’s all about collective intelligence, because the six vertices of the hexagon are interdependent: the green lines (relationships) are as important as, if not more important than, the points where they end.

Figure 4

This framework is valid for society in general, but also for any particular community. By the way, virtual, actual, sign, being and thing are (along with void) the semantic primitives of the IEML language (Information Economy MetaLanguage) that I invented and of which I’ll say a few words below.

The six vertices of the hexagon are not only the main fulcrums of human collective intelligence, they are also universes of problems to be solved:

- problems of knowledge creation and learning

- communication problems

- problems of legislation and ethics

- social and political problems

- economic problems

- technical, health and environmental problems.

How can we solve these problems?

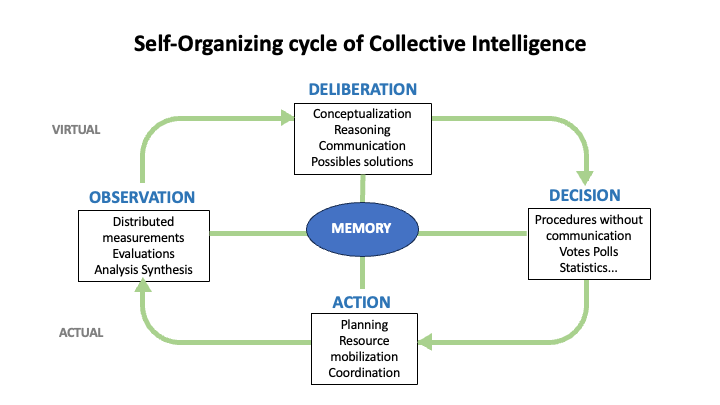

THE SELF-ORGANIZING CYCLE OF COLLECTIVE INTELLIGENCE

Figure 5 shows a four-stage problem-solving cycle. For each of the four phases of the cycle (deliberation, decision, action and observation), there are many different procedures, depending on the traditions and contexts in which collective intelligence operates. You’ll notice that deliberation represents the virtual phase of the cycle, while action represents the actual phase. In this model, decision is the transition from the virtual to the actual, while observation is the transition from the actual to the virtual. I’d like to emphasize two concepts here – deliberation and memory – which are often overlooked in this context.

Figure 5

Let’s begin by stressing the importance of deliberation, which involves not only discussing the best solutions for overcoming obstacles, but also constructing and conceptualizing problems collaboratively. This conceptualization phase will strongly influence and even define many of the subsequent phases, and will also determine the organization of the memory.

As you can see from the diagram in Figure 5, memory lies at the heart of the self-organization of collective intelligence. Shared memory supports each phase of the cycle, helping to maintain the coordination, coherence and identity of collective intelligence. Indirect communication via a shared environment, which ethologists call stigmergic communication, is one of the main mechanisms underpinning the collective intelligence of insect societies. But whereas insects generally leave pheromone traces in their physical environments to guide the actions of their fellow creatures, we leave symbolic traces not only in the landscape, but also in specialized memory devices such as archives, libraries and, today, databases. The problem of the future of digital memory lies before us: how can we design this memory in such a way that it is as useful as possible to our collective intelligence?
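The cycle and its shared memory can be pictured with a small sketch. This is only an illustration under stated assumptions: the names `Mode`, `PHASES`, `SharedMemory`, `run_cycle` and `is_closed_loop` are hypothetical, and the strings written to memory are placeholders for whatever procedures a real community would use in each phase.

```python
from dataclasses import dataclass, field
from enum import Enum


class Mode(Enum):
    VIRTUAL = "virtual"
    ACTUAL = "actual"


# Each phase is tagged with the mode it starts in and the mode it ends in
# (an assumed encoding of the virtual/actual transitions described above).
PHASES = [
    ("deliberation", Mode.VIRTUAL, Mode.VIRTUAL),
    ("decision", Mode.VIRTUAL, Mode.ACTUAL),
    ("action", Mode.ACTUAL, Mode.ACTUAL),
    ("observation", Mode.ACTUAL, Mode.VIRTUAL),
]


def is_closed_loop(phases):
    """Check that each phase ends in the mode where the next one starts."""
    return all(
        phases[i][2] == phases[(i + 1) % len(phases)][1]
        for i in range(len(phases))
    )


@dataclass
class SharedMemory:
    """Stigmergic memory: phases communicate only through the traces they leave."""
    traces: list = field(default_factory=list)

    def leave_trace(self, phase, content):
        self.traces.append((phase, content))

    def recall(self, phase):
        return [content for p, content in self.traces if p == phase]


def run_cycle(memory, problem):
    """One pass through the cycle; deliberation reads earlier observations."""
    previous = memory.recall("observation")
    memory.leave_trace(
        "deliberation",
        f"frame '{problem}' given {len(previous)} past observation(s)",
    )
    memory.leave_trace("decision", f"choose a response to '{problem}'")
    memory.leave_trace("action", f"carry out the response to '{problem}'")
    memory.leave_trace("observation", f"record the outcome for '{problem}'")
    return memory


memory = SharedMemory()
run_cycle(memory, "water shortage")
run_cycle(memory, "water shortage")
```

On the second pass, deliberation finds the observation left by the first pass, which is the sense in which memory sits at the center of the cycle rather than at one of its stages.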

TOWARDS AN ARTIFICIAL INTELLIGENCE AT THE SERVICE OF COLLECTIVE INTELLIGENCE

Having acquired a few notions about intelligence in general, the foundations of human intelligence and the complexity of our collective intelligence, we can now ask ourselves about the relationship between our intelligence and machines.

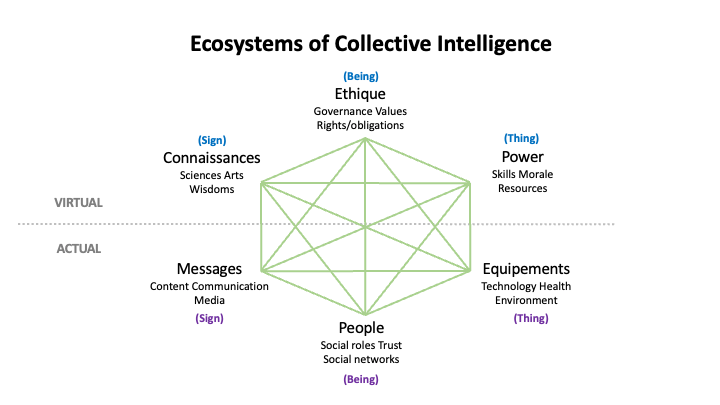

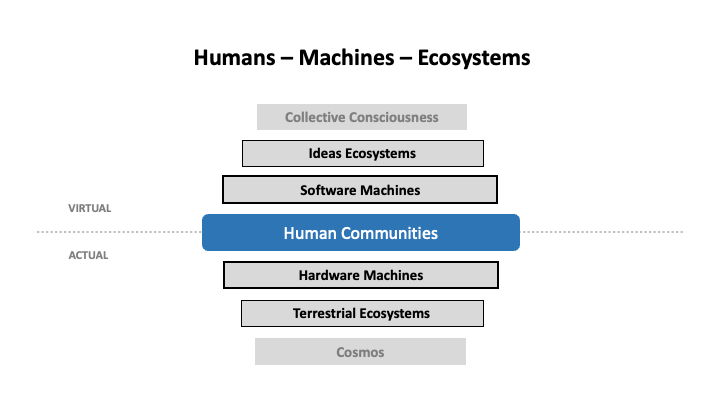

Figure 6

Figure 6 provides an overview of our situation. In the middle are the “living”: human populations, with the actual bodies and virtual minds of individuals. Immediately in contact with individuals are the hardware machines (or mechanical bodies) on the actual side and, on the virtual side, the software machines (or mechanical minds). Hardware machines increasingly play the role of interface or medium between us and terrestrial ecosystems. Software machines, for their part, are becoming the main intermediary – a medium once again – between human populations and the ecosystems of ideas with which we live in symbiosis. As for collective consciousness, we’re not there yet. It’s more a horizon, a direction to aim for, than a reality. We need to understand Figure 6 by mentally adding feedback loops or interdependencies between adjacent layers, between the virtual and the actual, between the mechanical and the living. On an ethical level, we can assume that living human communities receive the benefits of terrestrial ecosystems and ecosystems of ideas in proportion to the work and care they put into maintaining them.

INTELLIGENCE AUTOMATION

Let’s zoom in on our mechanical environment with Figure 7. A machine is a technical device built by humans, an automaton that moves or operates “by itself”. Today, the two types of machine – software and hardware – are interdependent. They could not exist without each other, and are in principle controlled by human communities, whose physical and mental capacities they augment. Because technology externalizes, socializes and reifies human organic and psychic functions, it can sometimes appear autonomous or at risk of becoming autonomous, but this is an optical illusion. Behind “the machine” lies collective intelligence and the social relations it reifies and mobilizes.

Figure 7

Mechanical machines are those that transform motion, starting with the sail, the wheel, the pulley, the lever, gears, springs and so on. Examples of purely mechanical machines include watermills and windmills, classical clocks, Renaissance printing presses and the first weaving looms.

Energetic machines are those that transform energy into heat or electricity. Examples include furnaces, forges, steam engines, internal combustion engines, electric motors, and contemporary processes for generating, transmitting and storing electricity.

As for electronic machines, they control energy and matter at the level of electromagnetic fields and elementary particles, and very often serve to control the lower-layer machines on which they also depend. For our purposes here, these are mainly data centers (the “cloud”), networks and devices that are in direct contact with end-users (the “edge”), such as computers, telephones, games consoles, virtual reality headsets and the like.

Let’s take a look at the virtual part, which corresponds to the shared memory we put at the heart of our description of the self-organizing cycle of collective action. While point-to-point messages are still exchanged, most social communication now takes place stigmergically in digital memory. We communicate via the oceanic mass of data that brings us together. Every link we create, every tag or hashtag affixed to a piece of information, every act of evaluation or approval, every “like”, every request, every purchase, every comment, every share – all these operations subtly modify the common memory, i.e. the inextricable magma of relationships between data. Our online behavior emits a continual flow of messages and clues that transform the structure of memory, help direct the attention and activity of our contemporaries, and drive artificial intelligence. But all this is happening today in a rather opaque way, which doesn’t do justice to the necessary phase of deliberation and conscious conceptualization that would be that of an ideal collective intelligence.

Above all, memory comprises the data that is produced, retrieved, explored and exploited by human activity. Human-machine interfaces represent the “front-end” without which nothing is possible. They directly determine what we call the user experience. Between interfaces and data, there are two main types of artificial intelligence models: neural models and symbolic models. We saw above that “natural” human intelligence is based on neural and symbolic coding. We find these two types of coding, or rather their electronic transposition, at the digital memory layer. It’s worth noting that these two approaches, neural and symbolic, already existed in the early days of AI, as early as the middle of the 20th century.

The neural models are trained on the multitude of digital data available, and they automatically extract patterns that no human programmer would have been able to work out. Conditioned by their training, the algorithms can then recognize and produce data corresponding to the learned patterns. But because they have abstracted structures rather than recording everything, they are now capable of correctly categorizing forms (image, text, music, code…) they have never encountered before, and producing an infinite number of new symbolic arrangements. This is why we speak of generative artificial intelligence. Neural AI synthesizes and mobilizes shared memory. Far from being autonomous, it extends and amplifies the collective intelligence that produced the data. What’s more, millions of users contribute to perfecting the models by asking them questions and commenting on the answers they receive. Take Midjourney, for example, whose users exchange prompts and constantly improve their AI skills. Today, Midjourney’s Discord servers are the most populous on the planet, with over a million users. A similar phenomenon is beginning to unfold around DALL·E 3. A new stigmergic collective intelligence is emerging from the fusion of social media, AI and creator communities. These are examples of conscious contributions of collective human intelligence to artificial intelligence systems.

Many generalist pre-trained models are open-source, and several methods are now being used to refine or adjust them to particular contexts, whether based on elaborate prompts, additional training with special data or by means of human feedback, or a combination of these methods. In short, we now have the first beginnings of a neural collective intelligence, which emerges from a statistical calculation on data. However, neural models, useful and practical as they may be, are unfortunately not reliable knowledge bases. They inevitably reflect common opinion and the biases inherent in the data. Because of their probabilistic nature, they are prone to all kinds of errors. Finally, they don’t know how to justify their results, and this opacity is not conducive to building confidence. Critical thinking is therefore more necessary than ever, especially if training data is increasingly produced by generative AI, creating a dangerous epistemological vicious circle.

Let’s turn now to symbolic models. They go by various names: tag collections, classifications, ontologies, knowledge graphs or semantic networks. These models can be reduced to explicit concepts and equally explicit relationships between these concepts, including causal relationships. They allow data to be organized semantically according to the practical needs of user communities, and enable automatic reasoning. With this approach, we obtain reliable, explicable knowledge that is directly adapted to the intended use. Symbolic knowledge bases are wonderful ways of sharing knowledge and skills, and therefore excellent tools for collective intelligence. The problem is that ontologies or knowledge graphs are created “by hand”. Formal modeling of complex knowledge domains is difficult. The construction of these models is time-consuming for highly specialized experts and therefore costly. The productivity of this intellectual work is low. Moreover, while there is interoperability at the level of file formats for semantic metadata (or classification systems), this interoperability does not exist at the semantic level of concepts, which compartmentalizes collective intelligence. Wikidata is used for encyclopedic applications, schema.org for websites, the CIDOC-CRM model for cultural institutions, and so on. There are hundreds of incompatible ontologies from one domain to another, and often even within the same domain.
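To make the idea of explicit concepts, explicit relationships and automatic reasoning concrete, here is a minimal sketch. It is a toy, not an excerpt from any real ontology: the triples, the relation names `is_a` and `has_part`, and the helper functions `ancestors` and `explain` are all assumptions introduced for illustration.

```python
# Toy knowledge graph: explicit concepts linked by explicit, named relations.
# The content is invented for illustration and contains no cycles.
TRIPLES = {
    ("sparrow", "is_a", "bird"),
    ("bird", "is_a", "animal"),
    ("animal", "is_a", "organism"),
    ("bird", "has_part", "wing"),
}


def ancestors(concept, triples):
    """Return every concept reachable from `concept` through is_a links."""
    found = set()
    frontier = [concept]
    while frontier:
        current = frontier.pop()
        for subject, relation, obj in triples:
            if subject == current and relation == "is_a" and obj not in found:
                found.add(obj)
                frontier.append(obj)
    return found


def explain(concept, target, triples):
    """Return the chain of stated facts justifying `concept is_a target`, or None.

    Assumes the is_a links form no cycle, as in the toy graph above.
    """
    if concept == target:
        return []
    for subject, relation, obj in sorted(triples):
        if subject == concept and relation == "is_a":
            rest = explain(obj, target, triples)
            if rest is not None:
                return [(subject, relation, obj)] + rest
    return None


inferred = ancestors("sparrow", TRIPLES)
justification = explain("sparrow", "organism", TRIPLES)
```

The conclusion that a sparrow is an organism is never stated in the data; it is derived, and `explain` returns the exact chain of stated facts behind it. This is the sense in which symbolic knowledge is explicable. The sketch also hints at the cost: every triple had to be written by someone.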

For years, many researchers have been advocating the use of hybrid neuro-symbolic models, in order to benefit from the advantages of both approaches. My message is as follows. If we want to move towards a digitally-supported collective intelligence worthy of the name, and which keeps up with our contemporary technical possibilities, we need to:

1) Renew symbolic AI by increasing the productivity of formal modeling and decompartmentalizing semantic metadata.

2) Couple this renewed symbolic AI with neural AI, which is in full development.

3) Put this previously unseen hybrid AI at the service of collective intelligence.

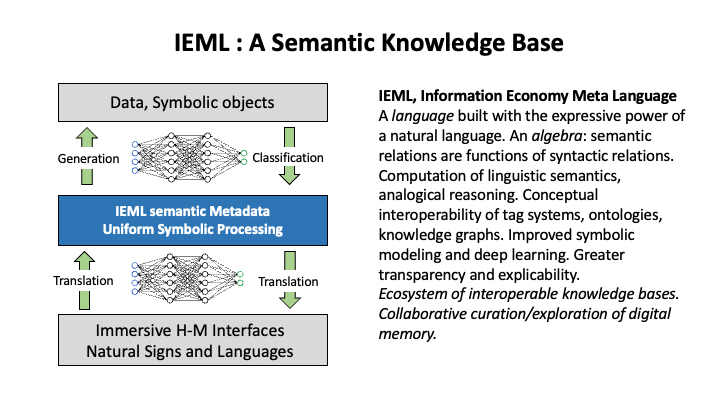

IEML: FOR A SEMANTIC KNOWLEDGE BASE

We have automated and pooled pattern recognition and automatic pattern generation, which is more neural in nature. How can we automate and pool conceptualization, which is more symbolic in nature? How can we bring together formal conceptualization by living humans and the pattern recognition that emerges from statistics?

Figure 8

Because our collective intelligence is increasingly based on a shared digital memory, I’ve been looking over the last thirty years for a semantic coordinate system for digital memory, a metadata system that would automate conceptualization operations and enable conceptual models to be shared.

The only thing that can generate all the concepts we want, while maintaining mutual understanding, is a language. But natural languages are irregular and ambiguous, and their semantics cannot be computed. So I built a language – IEML (Information Economy MetaLanguage) – whose internal semantic relations are functions of syntactic relations. IEML is both a language and an algebra. It is designed to facilitate and automate the construction of symbolic models as far as possible, while ensuring their semantic interoperability. In short, it’s a tool for automating and sharing conceptualization, with the vocation of serving as a universal semantic metadata system.

We can now answer our main question: how can we use artificial intelligence to increase collective intelligence? We need to imagine an ecosystem of semantic knowledge bases organized according to the architecture described in Figure 8. As you can see, there are three layers between the human-machine interface and the data. In the center, the semantic metadata layer organizes the data on a symbolic level and, thanks to its algebraic structure, enables all kinds of uniform logical, analogical and semantic calculations. We know that symbolic modeling is difficult, and today’s ontology editors don’t make it easy. That’s why, under the metadata layer, I’m proposing to use a neural model to translate natural sign systems into IEML and vice versa, which would make editing and inspecting semantic models as intuitive as possible. Between the metadata layer and the data layer, a neural model would enable the automatic generation of data from IEML prompts. In the opposite direction, the neural model would automatically classify the data and integrate it into the semantic model of the user community. Note that the algebraic properties of IEML are particularly aimed at perfecting machine learning.

The immersive human-machine interface using natural signs would enable anyone to collaborate in the conceptualization of models at the level of semantic metadata, and to generate the appropriate data by means of transparent prompts. Finally, this knowledge base would automate data categorization, exploitation and multimedia exploration.
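The flow between interface, metadata and data can be sketched in a few lines, with heavy caveats. Everything here is a hypothetical stand-in: the lookup table `WORD_TO_CONCEPT` replaces the neural translation model, the identifiers such as `C:feline` are invented labels and not IEML expressions, and the `KnowledgeBase` class is not part of any IEML software. Only classification and retrieval are shown; generating data from prompts is left out.

```python
from dataclasses import dataclass, field

# Hypothetical stand-in for a neural translation model: a lookup from
# natural-language words to shared concept identifiers. These identifiers
# are invented for illustration and are NOT IEML expressions.
WORD_TO_CONCEPT = {
    "cat": "C:feline",
    "chat": "C:feline",
    "dog": "C:canine",
    "chien": "C:canine",
}


def to_concepts(text):
    """Interface to metadata: map the words of a text to concept identifiers."""
    words = text.lower().split()
    return {WORD_TO_CONCEPT[w] for w in words if w in WORD_TO_CONCEPT}


@dataclass
class KnowledgeBase:
    # Metadata layer: concept identifier -> documents filed under it.
    index: dict = field(default_factory=dict)

    def classify(self, document):
        """Data to metadata: file a document under every concept it mentions."""
        for concept in to_concepts(document):
            self.index.setdefault(concept, []).append(document)

    def query(self, text):
        """Interface to data, via metadata: retrieve by concept, not by wording."""
        results = []
        for concept in sorted(to_concepts(text)):
            for document in self.index.get(concept, []):
                if document not in results:
                    results.append(document)
        return results


kb = KnowledgeBase()
kb.classify("Le chat dort")
kb.classify("The dog barks")
hits = kb.query("cat")
```

A query for "cat" retrieves the French document because both words resolve to the same concept identifier. The point of the sketch is only that retrieval goes through a shared conceptual layer rather than through surface wording.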

Such an approach would enable each community to organize itself according to its own semantic model, while supporting the comparison and exchange of concepts and sub-models. In short, an ecosystem of semantic knowledge bases using IEML would simultaneously maximize (1) intellectual productivity, through partial automation of conceptualization, (2) transparency of models and explicability of results, so important from an ethical point of view, (3) pooling of models and data, thanks to a common semantic coordinate system, and (4) diversity and creative freedom, since the networks of concepts formulated in IEML can be differentiated and complexified at will. A fine program for collective intelligence. My wish is for a digital memory that will enable us to cultivate diverse and fertile ecosystems of ideas and reap the maximum benefits for human development.

SOME REFERENCES

Lévy, Pierre. “Semantic Computing with IEML.” Collective Intelligence, 2023. 40 p.

https://journals.sagepub.com/doi/10.1177/26339137231207634

Lévy, Pierre. “Pour Un Changement de Paradigme en Intelligence Artificielle.” Giornale Di Filosofia 2, no. 2 (2021).

https://mimesisjournals.com/ojs/index.php/giornale-filosofia/article/view/1693

Lévy, Pierre. The Semantic Sphere. Computation, Cognition and Information Economy. Hoboken, NJ: Wiley, 2011.

Lévy, Pierre. “The IEML Research Program. From Social Computing to Reflexive Collective Intelligence.” Information Sciences 180, no. 1 (2010): 71–94.

Lévy, Pierre. Becoming Virtual. New York: Plenum Press, 1998. (1994 for the French edition)

Lévy, Pierre. Collective Intelligence. New York: Plenum Press, 1997. (1994 for the French edition)